News & Events

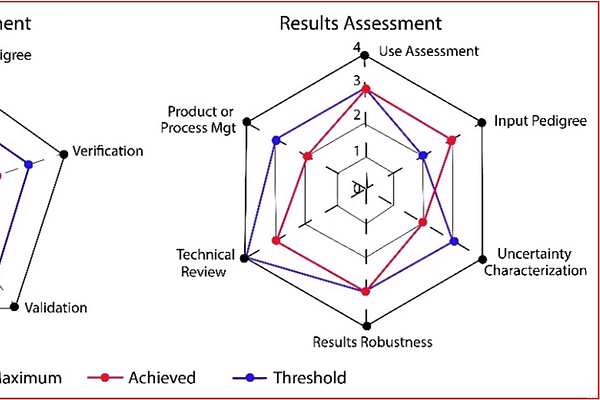

Compliance with ASME and NASA standards on verification, validation, and uncertainty quantification (VVUQ) is often taken as sufficient evidence that simulation results can be trusted. That is a mistake. Compliance alone does not answer the question that matters most to decision-makers: are the predictions reliable enough for the decision at hand?

AI is rapidly transforming engineering workflows, yet one fundamental issue is rarely discussed: AI explanations are only as trustworthy as the simulations they rely on. This blog post explores the technical requirements that numerical simulations must meet to provide the transparent, machine-interpretable evidence that explainable AI demands.

Turtle Shells and Legacy Finite Element Codes: Evolutionary Constraints in the Age of Explainable AI

This blog post explains why legacy finite element codes are like a turtle’s carapace: a protective structure that provided a survival advantage in the resource-scarce 1960s but now impedes the flexibility needed to meet the demands of explainable AI (XAI). The good news is that the technology required to support XAI already exists.

Dynamic Results Mining.

Solution Verification.

Simulation Governance.

Democratization.

Serving the Numerical Simulation community since 1989
