SAINT LOUIS, MISSOURI – December 5, 2017

In ESRD’s November S.A.F.E.R. Simulation post we summarized the business trends in A&D that are driving the need for higher-performing aerostructures that are more efficient, lighter-weight, and more durable and damage-tolerant over longer life spans. This in turn is driving the requirement for higher-fidelity engineering analysis that brings increased accuracy and reliability to the structural engineering function without adding more time and risk to the program schedule.

In this second installment of our multi-part series, “S.A.F.E.R. Numerical Simulation for Structural Analysis in the Aerospace Industry,” we distill what these trends mean for stress analysis groups and examine the challenges they face when using legacy simulation and analysis technologies based on the finite element method (FEM).

Aerospace & Defense budgets are squeezed ever tighter, yet simulation demands and complexities keep increasing…

The Democratization of Simulation

As discussed in our last post on the state of simulation in aerospace, FEA-based software tools have become increasingly advanced in functionality and richer in features. Not surprisingly, they have also become more complex to use and more difficult to master, even for expert analysts. Training analysts in FEA-based simulation software is a laborious, expensive process, and the skills gained are not always transferable as analysts move to new programs or employers that have their own tools, processes, and best practices.

Across the engineering software community there is much discussion about the democratization of simulation, meaning the reliable and routine use of numerical simulation software by people who are not simulation experts. These non-experts may be mechanical design engineers, occasional users, or new engineering graduates. The hope of democratization is that much of the complexity and risk of FEA-based simulation can be distilled out, so that engineers can perform simulation-driven design with greater confidence earlier in the design cycle.

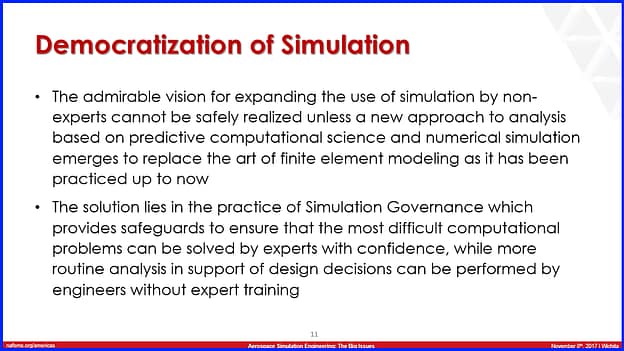

Excerpt from “The Role of Simulation Governance in the Democratization of Simulation Through Sim Apps in the A&D Industry” presented by ESRD’s CEO Dr. Ricardo Actis at NAFEMS 2017 Aerospace Simulation Engineering: The Big Issues

Indeed, democratization has great potential to compress the product development lifecycle, but is it a realistic objective for the demanding aviation, aerospace, and defense industries? The answer few may want to hear is that it will not be easy to accomplish with legacy FEA-based simulation technologies and the software tools built on them.

Previous attempts to put FEA tools into the hands of non-experts have not produced encouraging results. These schemes included embedding solvers in CAD software to hide complexity, using scripted templates to insulate users from making errors, and deploying wizards to automate processes. None of them has yet moved most FEA work off the expert analyst’s desktop and into the hands of the design engineer. On closer inspection, most of these approaches failed not because they were bad ideas, but because they were still built on legacy FEA methodologies, in which creating, debugging, running, and post-processing finite element models remains a complex, error-prone art form practiced by experts.

Challenges with Legacy FEA Software

Simulation software providers have continually sought ways to compensate for, and in some cases hide, the inherent complexity of the finite element method (FEM) when applied to analysis problems in computational solid mechanics. There have certainly been many advancements in the functionality, user interfaces, pre/post-processors, high-performance computing, delivery platforms, and licensing options of FEA software in recent years. Yet none of these, individually or collectively, has removed the intrinsic complexity of learning and performing FEA, for either the expert or the novice user.

There are many good reasons why this is so. The underlying theory and methods employed “under the hood” of nearly all FEA software products on the market today are in fact many decades old. As a result, there are nearly endless sources of assumptions, idealizations, approximations, manipulations, and judgement calls, each of which adds complexity, time, and uncertainty to engineering simulations.

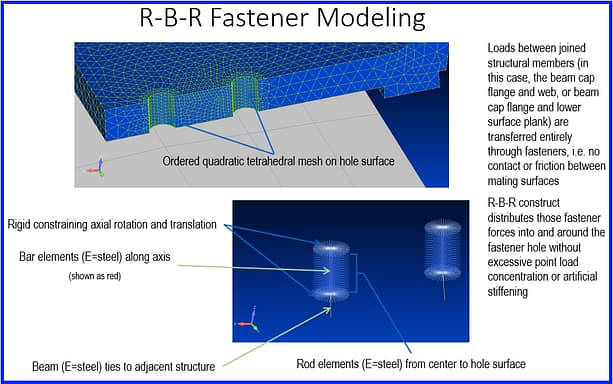

Example of loading fastener holes via Rigid Body Elements (RBEs). Using RBEs and other specialized element types may be acceptable for expert users, but for non-experts they can be a source of undetected errors.

As an example, the element libraries of most FEA software products contain dozens of variants and odd mutants that must be carefully selected and deployed. When these overly sensitive elements are used in fragile meshes, it is not uncommon for a finite element model to break with even small changes to design geometry or boundary conditions. The same elements and meshes can rarely be reused across different types of physics modelling, such as nonlinear, contact, heat transfer, dynamics, or fracture analysis.

More time is often spent in pre-processing, constructing “bad” models before finally arriving at “good” ones, than in post-processing the results or optimizing a design. While CAD data is increasingly 3D solid-based, it must frequently be repaired, defeatured, re-created, or reduced before meshing. In legacy FEA it is often unrealistic to use solid finite element models of large-spanning, multi-scale geometries of built-up components, which are common in aerostructures. Instead, a series of increasingly granular models must be painstakingly constructed to perform a sequence of multi-fidelity, multi-scale global/local analyses.

Extracting and validating results in traditional FEA is an equally laborious and inherently error-prone process. In legacy FEA software the degrees of freedom are nodal-based, which means quantities of interest at other locations must be interpolated, extrapolated, or otherwise massaged in ways that can inject additional inaccuracies into the engineering data. High-density meshes must be used in regions of steep stress gradients, which often requires a priori knowledge of the results and locations of interest, or else changing the model after the results are produced and iterating. It is not uncommon for models to be tuned and tweaked until the computational results align with empirical test data.

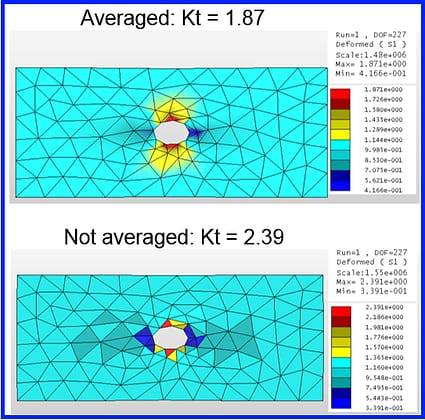

Averaged vs. unaveraged results from legacy FEA simulation technology. Which stress concentration factor (Kt) is more accurate, if either? Is this the right mesh density?
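To make the averaged vs. unaveraged question concrete, here is a minimal Python sketch. It assumes a single node on the edge of a fastener hole that is shared by three elements, each reporting its own stress at that node (for example, extrapolated from its integration points). The element names, stress values, and nominal stress are hypothetical and chosen only for illustration. The sketch computes Kt from the averaged value and from the unaveraged peak, and reports the relative jump between elements at the node, one rough indicator that the mesh has not yet resolved the stress gradient.

```python
# Minimal sketch: averaged vs. unaveraged nodal stress at one shared node.
# All names and values are hypothetical and for illustration only.


def nodal_stress_summary(element_values, nominal_stress):
    """Return Kt from averaged and unaveraged nodal values, plus the
    relative inter-element stress jump at the node.

    element_values: dict mapping element id -> stress at the shared node,
        as reported by that element (e.g., extrapolated from its Gauss points).
    nominal_stress: reference stress used to normalize Kt (same units).
    """
    values = list(element_values.values())
    averaged = sum(values) / len(values)
    unaveraged_peak = max(values)
    # For a homogeneous material the exact stress is continuous at this node,
    # so disagreement between adjacent elements reflects discretization error.
    jump = max(values) - min(values)
    return {
        "kt_averaged": averaged / nominal_stress,
        "kt_unaveraged": unaveraged_peak / nominal_stress,
        "relative_jump": jump / averaged,
    }


if __name__ == "__main__":
    # Hypothetical element-reported stresses (MPa) at a hole-edge node.
    readings = {"E1": 310.0, "E2": 262.0, "E3": 288.0}
    summary = nodal_stress_summary(readings, nominal_stress=100.0)
    for key, value in summary.items():
        print(f"{key}: {value:.3f}")
```

With these made-up numbers the two Kt values differ by roughly 8%, and nothing in either value alone says which one, if either, is close to the true stress concentration.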

All of the above limitations and challenges are so familiar to the expert analyst, who typically has an advanced engineering degree and many years of experience, that they rarely think twice about whether it has to be this complex. They know all the traps, fixes, tricks, and workarounds of finite element modelling. Yet a more fundamental challenge is often overlooked: legacy finite element methods do not provide a foolproof measure of the quality of their solutions. There is no inherent quality assurance, much less explicit support for solution verification. As such, it is up to the individual analyst to assess the applicability, accuracy, and completeness of the computed results. It is no wonder that an expert must always be in the loop to determine whether the results are good and, more importantly, when they are deficient.
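One generic way to perform the solution-verification step described above is to solve the same problem on a sequence of systematically refined meshes and check whether a quantity of interest, such as a peak stress or Kt, is converging. The Python sketch below uses classical Richardson extrapolation over three meshes refined by a constant ratio `r`. It is a textbook technique swapped in for illustration, not a description of how StressCheck or any other product performs verification, and the input values are synthetic. It assumes the error behaves like C·h^p in the asymptotic range and refuses to produce an estimate when successive differences do not shrink monotonically.

```python
import math


def richardson_estimate(f_coarse, f_medium, f_fine, r):
    """Estimate the observed convergence order p, an extrapolated limit value,
    and the relative error of the finest solution from three mesh solutions.

    Assumes the meshes are refined by a constant ratio r > 1
    (coarse -> medium -> fine) and that the error behaves like C * h**p.
    """
    if r <= 1:
        raise ValueError("refinement ratio r must be greater than 1")
    d_coarse = f_medium - f_coarse
    d_fine = f_fine - f_medium
    # Successive differences must keep the same sign and shrink for the
    # asymptotic error model to apply; otherwise no reliable estimate exists.
    if d_fine == 0 or d_coarse / d_fine <= 1:
        raise ValueError("differences do not shrink monotonically; no reliable estimate")
    p = math.log(d_coarse / d_fine) / math.log(r)  # observed order of convergence
    f_extrap = f_fine + d_fine / (r**p - 1)        # estimate of the converged value
    rel_error = abs((f_extrap - f_fine) / f_extrap)
    return p, f_extrap, rel_error


if __name__ == "__main__":
    # Synthetic Kt values from three meshes, element size halved each time.
    p, kt_limit, err = richardson_estimate(2.84, 2.96, 2.99, r=2.0)
    print(f"observed order p = {p:.2f}")
    print(f"extrapolated Kt  = {kt_limit:.3f}")
    print(f"estimated relative error of finest mesh = {err:.2%}")
```

The design choice worth noting is the explicit refusal path: when convergence is not monotone, the function raises rather than returning a number, so the quality check is stated in the code instead of being left to an analyst's judgement.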

It All Ends Up On The Engineer’s Desktop…

The confluence of demanding A&D business drivers, higher product performance requirements, and increasingly complex digital simulation all ends up on the structures engineer’s desk. The stress analyst on a modern A&D program bears the burden of producing a larger volume of higher-fidelity analyses, earlier in the new product development (NPD) cycle, across an expanded optimization space of structural design variations.

In doing so, they are expected to create all-encompassing 3D digital models that leave few details out, in order to support virtual prototyping and reduce physical testing, while using more sophisticated tools that take longer to learn and master. They are also expected to perform these analyses in less time, with greater confidence in the results and less tolerance for uncertainty or the “fat” factors of safety that were once acceptable in yesteryear’s aerostructure designs.

Compounding these pressures, today’s analysts may no longer have access to internal support from engineering methods groups, which historically provided training, troubleshot problems, captured institutional knowledge, and shared best practices. It is little surprise that industry associations like NAFEMS report that oversight of the simulation function through the practice of Simulation Governance is one of “The Big Issues” for engineering managers, who often see their simulation teams struggle to deliver against so many conflicting requirements.

These pressures are not letting up, and current trends do not appear sustainable. FEA-based structural analysis often adds so much time and complexity to engineering processes that project managers seek to minimize its use whenever faster methods are available. Fortunately, a new generation of simulation software is emerging for which that is no longer the case.

Coming Up Next…

In Part 3 of this series we will explain why Numerical Simulation is not the same as Finite Element Modeling and what this means for engineering analysis within the A&D industry. We will also describe how the practice of Simulation Governance, enabled by the next generation of software based on numerical simulation, is helping engineering groups respond to an avalanche of complexity in products, processes, and tools.

In our final segment we’ll profile the capabilities of ESRD’s numerical simulation software StressCheck™ and of Smart Sim Apps deployed in a Digital CAE Handbook built with StressCheck. We’ll also share use-case examples from A&D that document the benefits to engineers, and the value to their programs, of this newer generation of analysis software that is Simple, Accurate, Fast, Efficient, and Reliable (S.A.F.E.R.) for expert and non-expert users alike.

Next Week’s Webinar…

On Thursday, December 14th @ 1:00 pm EST, ESRD’s Brent Lancaster and Gordon Lehman will present an Aerospace & Defense-oriented webinar titled “High-Fidelity Stress Analysis for S.A.F.E.R. Structural Simulation Webinar.”

Sign Up for Next Week’s High-Fidelity Stress Analysis Webinar

Serving the Numerical Simulation community since 1989