By Dr. Barna Szabó

Engineering Software Research and Development, Inc.

St. Louis, Missouri USA

The American Society of Mechanical Engineers (ASME) and the National Aeronautics and Space Administration (NASA) have published standards for verification, validation, and uncertainty quantification (VVUQ) [1–6]. These standards emphasize model credibility rather than decision-grade reliability. Consequently, compliance with them does not answer the question that matters most to decision-makers: Are the predictions reliable enough for the decisions at hand?

Credibility vs Reliability

Credibility, as defined in these standards, is process-based: it requires evidence that appropriate verification, validation, and uncertainty quantification activities were performed for a given application. Reliability, by contrast, is outcome-based: it concerns the accuracy of predictions relative to specific quantities of interest (QoI). As a result, a model may fully comply with ASME and NASA standards yet still produce results that lack decision-specific error metrics.

Typical Use Cases

The following use cases illustrate the critical importance of decision‑grade reliability in model predictions.

- Given a spacecraft, a set of design rules, and a mission characterized by a set of load cases, report all margins of safety within a 3% error tolerance.

- An aerospace corporation is planning to use an advanced composite material system to design a new airframe. Because the corporation lacks prior experience with this material, new design rules must be developed. This will require the development of a mathematical model capable of predicting the onset of failure in structural components under various loading conditions.

- A marine helicopter was damaged in a war zone. The commanding officer must decide whether to destroy the aircraft or have it flown to a repair facility 250 miles away. To make that decision, the officer needs to know the probability of catastrophic failure under these conditions.

In the first use case, it must be demonstrated that the margins of safety are computed with sufficient accuracy, and the decision-maker must be provided with evidence that the reported margins lie within acceptable error bounds. In this context, the reliability metric is the estimated relative error in the margins of safety, represented as a set of deterministic values. Because loading conditions apply to the spacecraft as a whole, while strength requirements must be satisfied at the level of structural details, this verification problem involves multiscale analysis.
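The first use case can be sketched in a few lines. The example below is only an illustration under stated assumptions: it uses the common definition MS = allowable / (FS × applied) − 1, which the text does not spell out, and it treats the estimated relative error in the computed stress as a two-sided bound. The names `margin_of_safety`, `report_margin`, and `MarginReport` are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class MarginReport:
    margin: float            # margin computed from the finite element stress
    lower: float             # margin if the exact stress is higher by rel_err
    upper: float             # margin if the exact stress is lower by rel_err
    margin_rel_err: float    # worst-case relative error in the margin
    within_tolerance: bool   # True if margin_rel_err <= tol


def margin_of_safety(allowable: float, applied: float, fs: float = 1.0) -> float:
    # Assumed definition: MS = allowable / (FS * applied) - 1
    return allowable / (fs * applied) - 1.0


def report_margin(allowable: float, stress: float, rel_err: float,
                  fs: float = 1.0, tol: float = 0.03) -> MarginReport:
    """Propagate a relative error bound on the stress into the margin."""
    if stress <= 0.0 or not 0.0 <= rel_err < 1.0:
        raise ValueError("need stress > 0 and 0 <= rel_err < 1")
    nominal = margin_of_safety(allowable, stress, fs)
    lower = margin_of_safety(allowable, stress * (1.0 + rel_err), fs)
    upper = margin_of_safety(allowable, stress * (1.0 - rel_err), fs)
    if nominal == 0.0:
        margin_rel_err = math.inf  # relative error is undefined at zero margin
    else:
        margin_rel_err = max(upper - nominal, nominal - lower) / abs(nominal)
    return MarginReport(nominal, lower, upper, margin_rel_err,
                        margin_rel_err <= tol)


# Hypothetical numbers: allowable 450, computed stress 300, FS = 1.25
ok = report_margin(450.0, 300.0, rel_err=0.004, fs=1.25)
bad = report_margin(450.0, 300.0, rel_err=0.01, fs=1.25)
```

With these numbers the margin is about 0.2, and a 1% error in the stress becomes roughly a 6% error in the margin. The error tolerance on the margins therefore implies a considerably tighter tolerance on the underlying quantities of interest when margins are small.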

In the second and third use cases, the reliability metric is stochastic, with parameters that characterize the probability density functions of the quantities of interest. These use cases involve model development.

In a model development project, three sources of error must be controlled: model‑form errors, numerical approximation errors, and calibration errors. Model development projects are typically open-ended because experimental information accumulates over time and new ideas for improvement may be proposed at any time, necessitating updates. It is management’s responsibility to create favorable conditions for the evolutionary development of mathematical models.

An essential attribute of a mathematical model is the set of restrictions arising from the assumptions incorporated in its formulation, together with the limitations imposed on calibrated model parameters by the availability of experimental data. Collectively, these restrictions and limitations define the domain of calibration (DoC). A model is validated within its domain of calibration.

Classification

Model development in the engineering and applied sciences is addressed in [7], where a model development project is classified as progressive if the DoC is increasing, stagnant if the DoC is not increasing, and improper if the formulation contains inconsistencies or if the problem‑solving method lacks the capability to estimate and control numerical approximation errors in the quantities of interest. Based on these criteria, the large majority of past and present model development projects are, unfortunately, improper.

Predictions are reliable only when the model formulation is proper and the input data and calibrated parameters lie within the DoC. A key objective of model development is to ensure that the DoC is sufficiently large to encompass all intended applications of the model.
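The classification and the reliability condition can be expressed as a small sketch. It assumes, purely for illustration, that the DoC can be represented as a set of closed intervals on named parameters; in practice the DoC also includes the modeling assumptions themselves. All names below are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class ProjectStatus(Enum):
    PROGRESSIVE = "progressive"
    STAGNANT = "stagnant"
    IMPROPER = "improper"


def classify_project(formulation_consistent: bool,
                     controls_numerical_error: bool,
                     doc_increasing: bool) -> ProjectStatus:
    # Improper: inconsistent formulation, or no means of estimating and
    # controlling numerical approximation errors in the QoIs.
    if not (formulation_consistent and controls_numerical_error):
        return ProjectStatus.IMPROPER
    return ProjectStatus.PROGRESSIVE if doc_increasing else ProjectStatus.STAGNANT


@dataclass(frozen=True)
class DomainOfCalibration:
    # Assumed representation: parameter name -> (lower, upper) calibrated range
    bounds: dict

    def contains(self, params: dict) -> bool:
        # Every calibrated parameter must be supplied and lie within its range.
        return all(name in params and lo <= params[name] <= hi
                   for name, (lo, hi) in self.bounds.items())


def prediction_is_reliable(status: ProjectStatus,
                           doc: DomainOfCalibration,
                           params: dict) -> bool:
    # Reliable only if the formulation is proper and the inputs are in the DoC.
    return status is not ProjectStatus.IMPROPER and doc.contains(params)


# Hypothetical calibrated ranges for a fatigue model
doc = DomainOfCalibration({"stress_ratio": (-1.0, 0.5), "temperature_C": (20.0, 120.0)})
status = classify_project(True, True, doc_increasing=False)
inside = prediction_is_reliable(status, doc, {"stress_ratio": 0.1, "temperature_C": 80.0})
outside = prediction_is_reliable(status, doc, {"stress_ratio": 0.1, "temperature_C": 200.0})
```

Note that a stagnant project can still yield reliable predictions inside its DoC. Under these criteria, only an improper formulation or an input outside the DoC rules out reliability.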

Assurance of Reliability: Technical Requirements

Solution verification, that is, the estimation and control of approximation errors, is required in all three use cases. This is possible only if the mathematical problem has a unique exact solution and the quantities of interest have finite values. In problems of linear elasticity, this means, for example, that point constraints and sharp corners are not permitted if the maximum stress is of interest.

To estimate the relative error in a QoI, a converging sequence of finite element solutions is needed. The values of the QoI computed from these solutions converge to a limit, and the estimated limit serves as the estimate of the exact value of the QoI. Converging sequences can be obtained by mesh refinement or by increasing the polynomial degrees of the elements; the latter is the more efficient of the two [8].
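One simple way to estimate the limit from a converging sequence is Aitken's delta-squared extrapolation, sketched below. This is a swapped-in textbook technique, not necessarily the estimator used in [8]. It assumes that the error shrinks by a roughly constant factor from one solution to the next, which is plausible for monotonic convergence under uniform increases in polynomial degree but should be checked case by case. The function names and the sample values are hypothetical.

```python
def aitken_limit(q1: float, q2: float, q3: float) -> float:
    """Extrapolate the limit of three successive QoI values.

    Assumes the errors form a geometric sequence: e3/e2 == e2/e1.
    """
    d1 = q2 - q1
    d2 = q3 - q2
    # Require monotonic convergence: successive changes shrink, same sign.
    if d1 == 0.0 or not 0.0 < d2 / d1 < 1.0:
        raise ValueError("sequence is not converging monotonically")
    return q3 - d2 * d2 / (d2 - d1)


def estimated_relative_errors(qoi_values):
    """Return the extrapolated limit and the relative error of each value."""
    if len(qoi_values) < 3:
        raise ValueError("at least three solutions are required")
    q_lim = aitken_limit(*qoi_values[-3:])
    if q_lim == 0.0:
        raise ValueError("relative error is undefined for a zero limit")
    return q_lim, [abs(q - q_lim) / abs(q_lim) for q in qoi_values]


# Hypothetical QoI values from three successive polynomial degrees
q_lim, errors = estimated_relative_errors([92.0, 98.0, 99.5])
```

For these sample values the extrapolated limit is 100 and the estimated relative error of the last solution is 0.5%. If the sequence oscillates or stalls, the function raises an error rather than returning a misleading estimate.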

Use cases 2 and 3 involve formulating, calibrating, and validating a mathematical model. It is useful to view a particular mathematical model as a special case of a more comprehensive model. For example, a model based on linear elasticity is a special case of a model that accounts for large displacements, which in turn is a special case of a model that also accounts for material nonlinearities. Since boundary conditions and material behavior can be characterized in many different ways, model hierarchies have many branches. This provides great flexibility, but at the cost of having to select the simplest model that accounts for all phenomena that significantly affect the quantity of interest. A mathematical model is reliable if it is properly formulated and operated within its DoC.

What the NASA VVUQ Standards Require

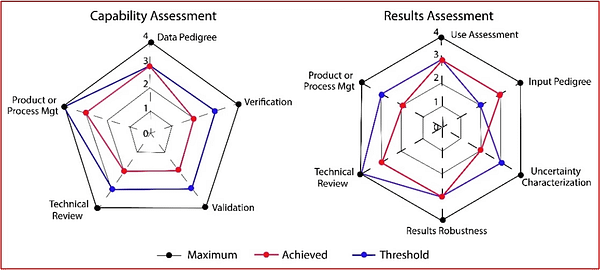

NASA-STD-7009B [5–6] requires reporting credibility status in two categories, Capability Assessment and Results Assessment, on a scale of 0 to 4. The recommended reporting format is a spider chart, as illustrated above. These charts provide ordinal assessments of process maturity but do not quantify decision-specific prediction errors or measures of uncertainty.

Simulation Governance is Essential

For decision-makers, the distinction between credibility and reliability is not a semantic nuance—it is a critical requirement for safety and project viability. Relying on the illusion of trustworthiness created by compliance with standards carries inherent risks. By understanding the limitations imposed by the domain of calibration and focusing on the accuracy of the QoIs, organizations can move beyond the “black box” mentality and base their decisions on computed information whose reliability is supported by solid evidence.

This will require exercising simulation governance, that is, command and control over all aspects of numerical simulation. First and foremost, it should be recognized that legacy finite element codes were not designed with reliability in mind. Consequently, organizations must establish clear technical criteria for evidence-based reporting of numerical simulation results and mandate that software providers demonstrate compliance with these criteria [9].

Takeaway

Reliability is achieved through rigorous, disciplined, and evidence-based engineering practices. Currently, compliance with published VVUQ standards is often taken as sufficient evidence that simulation results can be trusted. This is a mistake. Compliance alone does not answer the question that truly matters to decision-makers: Are the predictions reliable enough for the decision at hand? Requiring procedural credibility rather than decision-specific error metrics creates an illusion of trustworthiness, one that can mask unacceptable risks in high-consequence engineering decisions.

References

[1] Verification, Validation, and Uncertainty Quantification Terminology in Computational Modeling and Simulation. ASME VVUQ 1-2022.

[2] Standard for Verification and Validation in Computational Solid Mechanics. ASME V&V 10-2019 (R2025).

[3] Standard for Verification and Validation in Computational Fluid Dynamics and Heat Transfer. ASME V&V 20-2009 (R2021).

[4] Assessing Credibility of Computational Modeling Through Verification and Validation: Application to Medical Devices. ASME V&V 40-2018.

[5] Standard for Models and Simulations. NASA-STD-7009B, 2024.

[6] NASA Handbook for Models and Simulations: An Implementation Guide for NASA-STD-7009B, 2026.

[7] Szabó, B. and Actis, R. The demarcation problem in the applied sciences. Computers & Mathematics with Applications, 162, pp. 206-214, 2024.

[8] Szabó, B. and Babuška, I. Finite Element Analysis. Method, Verification and Validation. John Wiley & Sons Inc. Hoboken, NJ, 2021.

[9] Szabó, B. and Actis, R. Simulation governance: Technical requirements for mechanical design. Computer Methods in Applied Mechanics and Engineering, 249, pp. 158-168, 2012.

Related Blog Posts:

- A Memo from the 5th Century BC

- Obstacles to Progress

- Why Finite Element Modeling is Not Numerical Simulation?

- XAI Will Force Clear Thinking About the Nature of Mathematical Models

- The Story of the P-version in a Nutshell

- Why Worry About Singularities?

- Questions About Singularities

- A Low-Hanging Fruit: Smart Engineering Simulation Applications

- The Demarcation Problem in the Engineering Sciences

- Model Development in the Engineering Sciences

- Certification by Analysis (CbA) – Are We There Yet?

- Not All Models Are Wrong

- Digital Twins

- Digital Transformation

- Simulation Governance

- Variational Crimes

- The Kuhn Cycle in the Engineering Sciences

- Finite Element Libraries: Mixing the “What” with the “How”

- A Critique of the World Wide Failure Exercise

- Meshless Methods

- Isogeometric Analysis (IGA)

- Chaos in the Brickyard Revisited

- Why Is Solution Verification Necessary?

- Variational Crimes and Refloating the Costa Concordia

- Lessons From a Failed Model Development Project

- Where Do You Get the Courage to Sign the Blueprint?

- Great Expectations: Agentic AI in Mechanical Engineering

- The Differences Between Calibration and Tuning

- Honored in the Breach

- Remembering Ivo Babuška

- Turtle Shells and Legacy Finite Element Codes: Evolutionary Constraints in the Age of Explainable AI

- Beyond the Black Box: Explainable AI Requires Explainable Simulation

Serving the Numerical Simulation community since 1989
